I recently wrote a blog post entitled "Social Platforms: Problem Child or Fall Guy." In that post, I suggested that social platforms host misinformation public service announcements (PSAs) in users' news feeds to help prevent the spread of misinformation. In response, someone asked me, "Who should drive these PSA campaigns?" My instant answer was that the social media platforms should be responsible for driving this type of campaign. The reality is that it is not that simple.

Social media platforms have already been accused of censoring dissenting voices, and driving misinformation PSAs could invite additional accusations. I still like my idea because it helps educate the public on how to avoid spreading misinformation on social media. The number of social media users is estimated to reach nearly 4 billion by 2022. Offering misinformation education on every social platform could reach a vast audience and have a positive impact on the problem. However, social media platforms cannot be the only players in the game when it comes to fixing the misinformation crisis.

Misinformation can be traced back centuries, at least as far as the invention of the penny press. History shows that misinformation has been used as a tool for profit, power, and prestige. It is built into the systems we know today. We cannot assume that Big Tech and public education alone will fix this deep-rooted problem. Systems must change. The typical top-down or bottom-up hierarchical communication strategy will not work in this instance either.

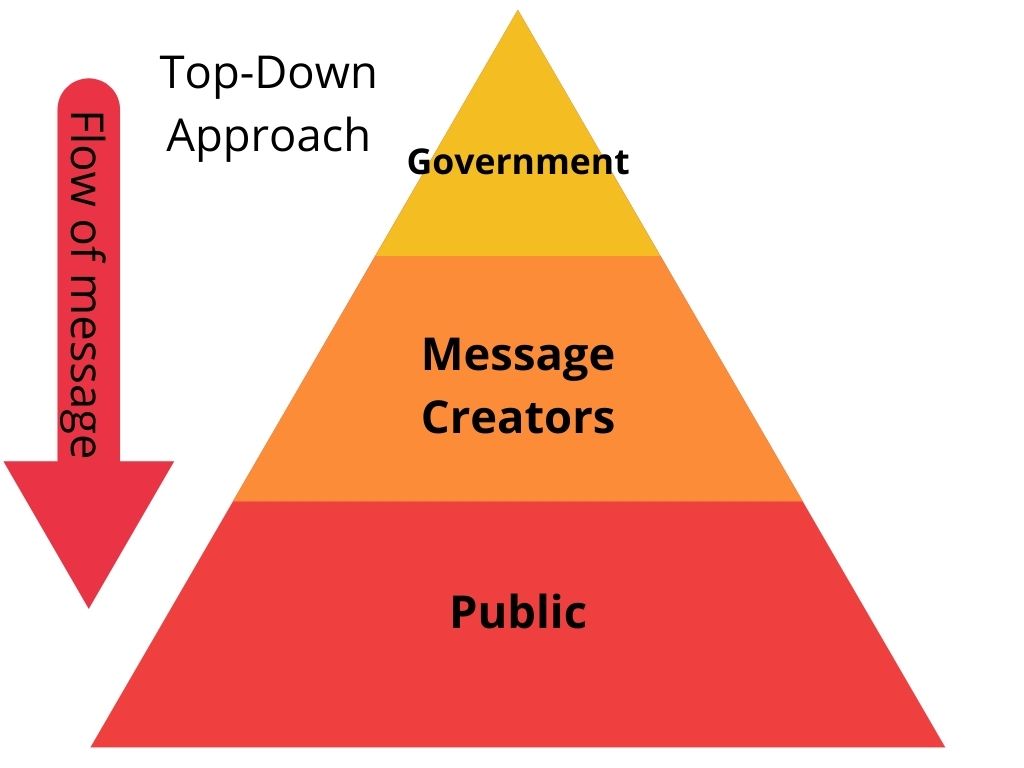

In a top-down approach, the government sits at the top and the general public at the bottom. If a proposed solution to the misinformation challenge comes from the government, it is easy to imagine the government being accused of tyranny or authoritarianism. In a country as divided as ours currently is, conspiracy theorists could generate even more misinformation, causing even greater public distrust of the government. A government-based solution could make the misinformation problem bigger, not smaller.

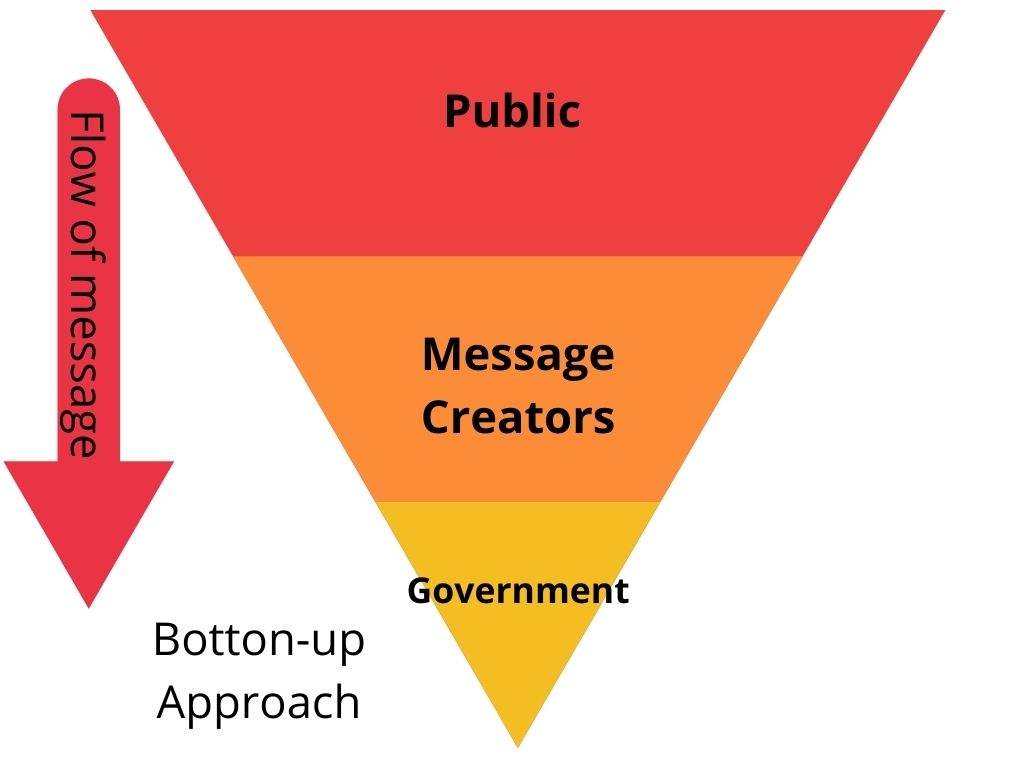

In a bottom-up approach, the solution needs to come from the people. In a divided country, could the public agree on a solution? In a poll taken in late 2021, 95% of respondents agreed that misinformation is a big problem. With so many people agreeing that it is a problem, a public push for a solution is possible. Unfortunately, only 20% of respondents worry about spreading it themselves. Unless most people are concerned about their own role in spreading misinformation, a bottom-up approach may be unsuccessful.

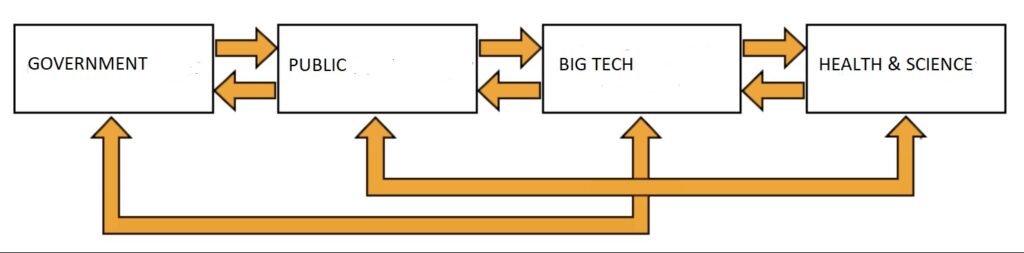

I am starting to believe that the only way to win the battle against misinformation is through a collaborative effort. A collaborative effort has to involve everyone, and by everyone, I mean the public, corporate America, and all branches of government. The battle against misinformation must be treated as a matter of social responsibility.

“Social responsibility is a moral obligation on a company or an individual to take decisions or actions that is in favor and useful to society”

—ByJu’s

For maximum societal involvement, we need to challenge traditional hierarchical thinking and frame this social responsibility movement in lateral terms. The lateral structure is most often associated with corporate organizational charts. This type of organizational structure lets departments work together toward a shared goal. Unlike a top-down organizational chart, where departments typically operate as separate units, a lateral structure removes those silos. We can borrow the lateral organizational chart and apply it to delivering consistent messaging through each segment of society.

For a lateral communication system to succeed, each participant must deliver consistent messaging about preventing the spread of misinformation. To do this, we can take a page out of the conspiracy theorists' playbook: repeat the same information over and over to make it believable. The more often the public hears the same message from various sources, the more likely people are to start repeating it as a mantra. We can put a positive spin on the illusory truth effect. Instead of a repeated lie becoming accepted as truth, a repeated fact can become a cultural norm.

Developing consistent messaging is where the challenge lies. Several questions come to mind:

- Where does the messaging come from?

- Who develops the content?

- Who pays for the campaign?

- How is the messaging delivered?

One answer to these questions could be to establish a misinformation task force. This task force should consist of seven people: experts in misinformation and digital literacy, a digital communication and design expert, a representative for public health and safety, a technology professional, an equal rights specialist, and a government official. Such a task force must be a well-rounded, non-partisan representation of the people. Its mission would be to develop a public service campaign aimed at curbing the spread of misinformation.

The questions of who will pay for this task force and how the messaging will be delivered require some creativity. The answers, in part, have to do with social responsibility. Preventing the spread of misinformation is a matter of social responsibility in which everyone has a role. The task force members' time and effort can be their charitable contribution to this cause. I believe the best delivery method is social media. The social media platforms' contribution to this cause would be to host the content and place it in users' news feeds.

The government's contribution to this cause would be its non-partisan support of these efforts and its commitment to delivering the same messaging when required. The public's contribution is to remember the PSAs' message and #TakeCareBeforeYouShare.

I know this idea may be unrealistic, but I am a solution-based visionary and a dreamer for a united world. 🥰❤️🌍
